Over the past few decades, a global epidemic has been quietly brewing. Mental health problems have grown rapidly around the world, and their catastrophic consequences have begun to attract significant attention.

In 2020, SAMHSA (the U.S. Substance Abuse and Mental Health Services Administration) released a groundbreaking report highlighting the devastating effects of mental and substance use disorders (M/SUD). SAMHSA's analysis found that "M/SUD treatment expenditures from all public and private sources are projected to reach $280.5 billion during 2020, up from $171.7 billion in 2009."

What's more, mental health problems not only place a heavy burden on patients and take an immeasurable toll on their families and care providers, but also cost countless lives. A purely monetary analysis is simply not enough to quantify the physical and emotional devastation that mental health disorders cause.

Fortunately, significant innovation and investment are now going into new remedies and additional treatment options for mental health. One of these novel approaches is the application of artificial intelligence in the mental health field.

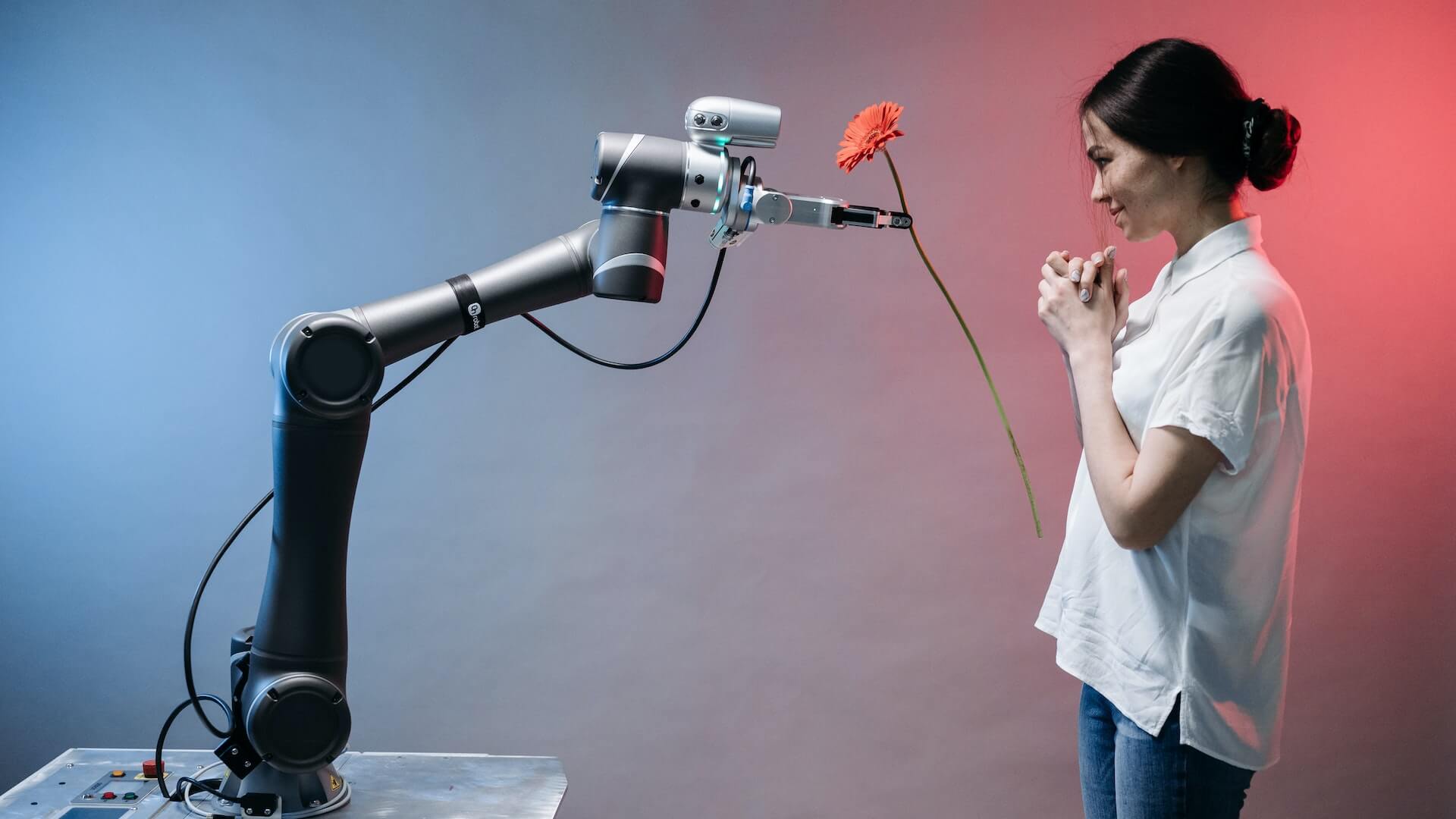

With the advent of generative AI, conversational AI, and natural language processing technologies, the idea of using AI systems as companions for humans has begun to move into the mainstream.

Google Cloud, a pioneer in the development of scalable AI solutions, has examined the definition and possibilities of conversational AI in depth: "Conversational AI would combine natural language processing and machine learning, trained using large amounts of data, such as text and speech material. This data will teach the system how to understand and process human language. Later, the system uses this knowledge to interact with humans in a more natural way. It will continue to learn from the interaction and further improve the quality of its response over time."

In other words, with sufficient data, training, and interaction, these systems will not only be able to reproduce human language, but may eventually be able to draw on billions of data points and evidence-based guidelines to offer medical advice and treatment options to their users. Tech giants such as Google, Amazon, and Microsoft have already invested billions of dollars in this technology, recognizing that current AI is only a few steps away from mastering human language and conversational skills. Once a company completes the last few pieces of the puzzle, the potential is enormous: everything from customer service to emotional companionship to the maintenance of interpersonal relationships could be run efficiently with AI as the driving force.

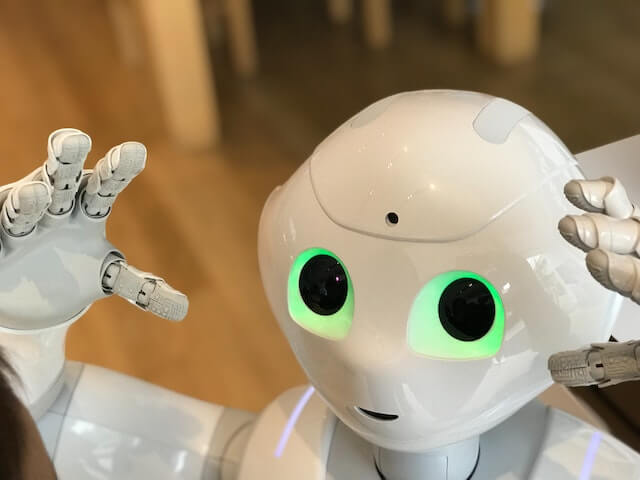

The AvatarMind iPal social companion for the care and companionship of children and the elderly is shown at the booth at CES 2017 at the Sands Expo and Convention Center in Las Vegas, Nevada, on January 5, 2017. The robot, which dances, tells stories, plays games and even listens to music, has 25 motors and is able to make movements similar to those of humans. Parents can use their cell phones to control it remotely, monitor where their children are, what they are doing, and video chat whenever they want.

However, this incredible technology also raises a number of potential concerns. While AI holds the promise of alleviating inequality, providing health care in a convenient way, and even providing companionship to those who need it most, its development must be accompanied by appropriate guidance and constraints for a number of practical reasons.

For one, patient privacy and data security are critical in an area as sensitive as mental health. AI technologies that take on the role of companion must collect large amounts of sensitive information, so developers must ensure that this data is never compromised, that patient privacy is always a top priority, and that their systems are continuously defended against growing cybersecurity threats.

In addition, some have raised more pragmatic concerns: just how far should humans go down this road? While AI certainly offers a number of benefits, innovators should also be cautious about the limitations of these systems. A system is only as good as the models and data sets it learns from. Built on poor foundations or placed in the wrong hands, these systems can easily give incorrect, or even dangerous, advice to vulnerable populations. Companies must therefore establish strict controls and practices for responsible development.

Finally, from a society-wide perspective, using AI systems to address mental health issues and autism also sets a dangerous precedent. So far, no system has been able to reproduce the intricacies of human interaction, emotion, and feeling. Healthcare leaders, regulators, and innovators must keep this basic premise in mind and prioritize viable and sustainable measures to address the mental health crisis, such as training more mental health professionals and increasing patient access to care.

In short, whatever the ultimate solution may be, we must act now; otherwise, this mental health epidemic will spiral out of control.